How are the US and Israel using artificial intelligence in attacks on Iran?

The “ethics” and “good AI” narratives promoted by technology monopolies are collapsing as their organic ties with the military-industrial complex come to light. “Big data” systems and language models, marketed as tools that make daily life easier, in fact operate as a fundamental component of the imperialist war industry. This colossal computational power, built through the appropriation of social labor and collective knowledge, is no longer confined to the boundaries of a commercial product. Through its structural integration with the Pentagon and the Israeli military, this productive force becomes a destructive one, serving as the primary infrastructure of colonial/imperialist doctrines of violence and targeting operations.

The imperialist attack by the US and Israel against Iran lays bare how these systems actually operate on the ground. The data processing power before us is not a passive auxiliary piece of software bolted onto the attack apparatus from outside. On the contrary, it is positioned as the core mechanism embedded directly in the chain of command, processing intelligence and taking over targeting decisions.

The stages of the attack: From cyber networks to firepower

The wave of attacks against Iran began on the night of February 28, 2026, with operations targeting the country’s digital networks. According to the operational timeline that Chairman of the US Joint Chiefs of Staff Dan Caine presented to the press on March 2, US Cyber Command and Space Command went into action hours before the physical destruction began. Before the first missiles reached their targets, a comprehensive cyberattack disabled Iran’s communication infrastructure, radar networks, and air defense sensors. By the morning of March 1, platforms such as the BadeSaba religious calendar application, which has more than 5 million downloads, had been hacked, and psychological warfare messages on the theme of a “time of reckoning” were broadcast nationwide. The aim was to use software to cripple Iran’s ability to communicate and detect danger in the very first moments of the attack.

Immediately after the communication network collapsed during the night of February 28 to March 1, operational artificial intelligence systems were placed at the center of the operation. Millions of data points from spy satellites, unmanned aerial vehicles (UAVs), ground radars, and intelligence agents in the field flowed into US Central Command (CENTCOM) headquarters in a single digital stream. Integrated systems such as Project Maven and Anthropic’s language models synthesized this massive body of data in minutes. The system automatically mapped moving objects, clusters of military vehicles, fuel depots, communication nodes, and “suspicious” structures, and presented a prioritized strike list to the command echelon.

From March 1, 2026, this software-processed list was passed to strike forces within minutes. In the final stage, fighter jets, cruise missiles, and other platforms were activated. The fact that more than 1,000 targets were struck in the first 24 hours of the operation alone shows that this was not merely an intense aerial bombardment. The firing tempo was coordinated at a much faster pace, through a targeting architecture operating entirely on machine logic.

Software authority narrowing the decision time

The command echelon that directed the attack also confirms the extraordinary speed and technical infrastructure established in the first phase of the operation. According to statements reported in the press on March 11, 2026, CENTCOM Commander Brad Cooper stated that the military had deployed various advanced AI tools on the battlefield and that these tools processed massive data sets in seconds, accelerating decision-making to an unprecedented degree. These programs significantly compress the time between finding, fixing, and finishing a target, the sequence known in military terminology as the “kill chain.” Scanning satellite imagery and decoding intelligence, work that previously took months, is reduced to a few minutes. Critical decisions, such as which structure to strike first, which weapon to assign to which target, or how to calculate the risk of civilian casualties, are made entirely at the pace of this digital flow. In other words, the machine does not merely serve as an archive storing information; it plays an active role, shaping maneuver options, shortening firing times, and automating the process of destruction.

The defense bureaucracy’s argument that “the final decision always rests with a human” largely becomes a formality in an accelerated operation on the ground. Although this setup is not an autonomous robot army replacing soldiers, it captures the very substance of decision-making and initiative. By the time the picture reaches the soldier who presses the “approve” button on the screen, automated systems have already decided which data to highlight, which structure to treat as a threat, which movement to flag as suspicious, and which target to strike first. The human operator often becomes a bureaucratic figure who approves the picture presented to them without question. The interface in front of them shows only data filtered by the software, detached from operational context, geographical reality, and historical background.

The decisive role that machine authority has acquired in command structures does not reflect a mere pursuit of military efficiency. US imperialism is trying to overcome its hegemonic crisis and declining influence by unleashing its technological superiority as violence on the battlefield at unprecedented speed. Reducing the time between finding and striking a target to seconds reflects a drive for absolute planetary domination, one that leaves the opposing side no time to react to or defend against imperialist intervention.

The Minab Primary School attack: The consequences of accelerated targeting

Increased operational speed eliminates the time for bureaucratic objections and the possibility of cross-verifying intelligence. As a result, the consequences of the slightest deviation in a data set, an old satellite image that was never updated, or a mathematical bias embedded in the code become extremely severe. The catastrophic possibilities created by the accelerated “kill chain” have not remained abstract. The US attack on the Shajarat al-Tayyibah Primary School in the city of Minab, Iran, on February 28, 2026, is one of the most tragic examples of the structural flaws of AI-supported systems.

This attack, in which at least 175 people, most of them children, were killed, prompted a thorough investigation into how the targets had been technically determined. According to preliminary investigation findings reported by The New York Times on March 11, 2026, CENTCOM relied on old, outdated coordinates in the Defense Intelligence Agency (DIA) database when striking this site. The school building had previously been part of a military base belonging to the Islamic Revolutionary Guard Corps Navy. However, visual evidence clearly shows that between 2013 and 2016 the building was separated from the military area by wire fences, its watchtowers were dismantled, its walls were painted, and a playground was added to its courtyard. Yet years later, the database still coded the site as a valid “military target.”

As the hour of the attack approached, Project Maven, supported by the National Geospatial-Intelligence Agency (NGA), was in operation, likely with Anthropic’s Claude language model integrated into it. The system continuously scanned hundreds of thousands of images and texts to identify “points of interest” on the ground. Military officials claim that the disaster stemmed not from the computing technologies themselves but from personnel failing to double-check old data amid the extraordinary speed of the war. But this is exactly where the real problem lies: the system produces targets so rapidly and in such large numbers that the human in between becomes a dysfunctional intermediary who merely presses the approval button. As the tempo of war increases, human verification collapses, and a few lines of outdated data lead a Tomahawk cruise missile to strike a primary school. Although the Trump administration tried to obscure responsibility for the attack in the early days, and Trump baselessly suggested that Iran itself might have struck the school, Tomahawk fragments found at the site and the military investigation’s findings make clear that the targeting infrastructure used directly by the US military is responsible.

The software center of the war: Palantir and Project Maven

At the center of this massive targeting structure lies the technology company Palantir. In public, the company’s role is glossed over with harmless labels such as “defense software provider,” the term favored by mainstream media. In practice, however, the operation on the ground involves delegating military command authority to a private company’s technology. Palantir builds the core platform that converts raw data flowing from the field into a target list. According to a March 9, 2026, letter from Deputy Secretary of Defense Steve Feinberg seen by Reuters, the Maven system was designated an official program of record.

This decision shows that the software is no longer a temporary technological experiment but one of the US military’s permanent and most fundamental decision-making tools. The same document states that Maven parsed battlefield data, identified targets from that data, and was used directly in thousands of targeted missile strikes against Iran. The transfer of battlefield surveillance from traditional institutions such as the National Geospatial-Intelligence Agency directly to the Pentagon’s Chief Digital and Artificial Intelligence Office shows that this was not just a profitable software contract but a comprehensive transformation of the military’s institutional structure.

The way the Maven system works shows how far automation has gone in the field. Using data fusion and deep learning algorithms, the software merges data in various formats from spy satellites in space, drone sensors in the air, radars on the ground, and intelligence reports onto a single screen. It then automatically labels the structures it classifies as enemy assets. At a Palantir event in March 2026, a Pentagon official explained how Maven had been used for targeting in the Middle East and showcased the system’s accelerated assessment capabilities with heat maps. These presentations emphasized that work which previously required hours of effort by dozens of analysts could now be done in seconds. Such demonstrations are more than product advertising: staged in the middle of an active war, while Iran is under fire, they signal the institutionalization of a technology company as an essential component of the war.

Palantir’s function is not limited to storing data or providing an interface. The platform the company has built can reportedly visualize and analyze potential targets and then automatically nominate them for ground or air strikes. The system offers the military command direct tactical recommendations on which aircraft should take off, which munition would have greater effect on a given terrain, and how to approach the target. In a published demonstration, the user asks the system about suspicious activity in a region; within seconds the system analyzes the probable troop structure, then generates alternative operational options, advance routes, and steps for disrupting enemy communications. At this point the software is no longer an auxiliary analysis tool; it determines target priority, the direction of the military’s maneuvers, and the allocation of bombs to be dropped, directly shaping the operational domain.

How the company positions itself is also key to understanding the structural dimension of the issue. Information from the company’s own events shows that its government revenue is growing at an unprecedented rate, that selling technology to militaries sits at the very core of its identity, and that, because of the active conflict in Iran, the priority of all its software teams has been reset to “directly support troops on the frontline.” It is no coincidence that Shyam Sankar, Palantir’s chief technology officer, also serves as a lieutenant colonel in the US Army Reserve. A war-technology executive who is directly part of the military structure that uses that technology shows how completely personnel, corporate language, and operational priorities are intertwined. The contract figures keep multiplying: a contract worth up to $480 million was signed for the Maven project in 2024 alone, and its ceiling was raised to $1.3 billion in 2025. Palantir is now a core manager of the Pentagon’s modernization process.

Deploying large language models to the front: Anthropic and Claude

The administrative dispute between the Pentagon and Anthropic, a company known for its human rights rhetoric even as it integrated into the war apparatus, serves an ideological function that covers up the reality on the ground. At first glance, the public got the impression of a principled conflict between a “technology company that sets limits” and a “state that knows no bounds.” In reality, however, the dispute was confined to a much narrower legal framework. Anthropic did not want the Pentagon to use its Claude model for mass domestic surveillance of US citizens or for fully autonomous weapon systems that require no human approval. The Pentagon rejected these restrictions and officially designated the company a supply chain risk on March 5, 2026.

This labeling and the accompanying ban did not mean that the Pentagon stopped using Claude in operations against Iran. On the contrary, it has been documented that the model continued to be used on the frontline for intelligence analysis, synthesizing text data from the field, and operational planning. A closer look at the technical details makes the situation even clearer. The Claude large language model was already integrated into Palantir’s Maven system via APIs. According to leaked reports, numerous command and intelligence workflows on the Maven platform were built directly on Anthropic’s code infrastructure. Intercepted radio conversations and Persian-language texts from Iranian personnel were instantly translated into English and turned into military summaries by these language models.

The company’s model functioned not as an independent research tool attached to the war chain from outside, but as a processing hub embedded directly in the command architecture. The visual layer that mathematically parsed satellite imagery and radar data and the language model that read and synthesized reports from the field were fused into a single targeting interface. Even after the Pentagon’s sanction, it became clear that it would take Palantir weeks to rip this code out of the heart of the system and replace it with another infrastructure. This shows how structurally embedded large language models have become in processing data on the frontline.

Colonial technology transfer: From Palestine to Iran

It must be emphasized that these technologies, which strike thousands of targets in seconds, did not emerge in a vacuum; they are the product of a specific colonial experience and military laboratory process. Many of the targeting systems, and much of the automated command logic, that the US military uses on a massive scale in Iran today were bloodily tested on real targets by the occupying and genocidal Israeli forces, in Palestinian territories for decades and more recently in Lebanon.

Using targeting systems such as Habsora (The Gospel) and Lavender in its Gaza operations, the Israeli military automatically marked buildings where tens of thousands of people lived and carried out various attacks. The Israeli military technology industry earned the “field-tested” label for its surveillance and targeting software directly over the bodies of Palestinians. Palestine functions as a closed testing ground where these new-generation weapons and data integrations are trialed before entering the global market. These systems, which reduce human life in Palestine to simple communication data (WhatsApp group membership, location data, etc.), treat casualties as a mathematical threshold in a cost calculation.

The technological infrastructure built and tested on Palestinians, combined with the cloud contracts of technology monopolies (such as Project Nimbus, provided by Google and Amazon), has become the main targeting logic of US operations in Iran today. For many years, Israel has used technology as a weapon to sustain its occupation, while also placing the data sets and practices of violence obtained from this testing ground at the service of US regional military objectives. In this context, technology transfer directly amounts to the transfer of the occupation experience and colonial reason.

Dismantling the imperialist war apparatus

The US and Israel use technology monopolies in their military operations not as ordinary suppliers providing software from outside, but as the main determinant of the decision-making and command chain. Systems such as Project Maven that turn data into a target bank become core programs of the war, language models such as Claude are integrated into intelligence layers, and Silicon Valley capital shoulders this massive computational load. The code they produce and the infrastructure they build turn these companies (and other major technology monopolies) from spectators of the process into main actors in imperialist intervention and destruction on the ground.

Faced with this picture, calling on technology companies to adhere to “ethical rules” or demanding more transparent artificial intelligence misses the essence of the matter. Security protocols written into corporate charters vanish in an instant when the military objectives of the Pentagon or Israel are at stake. There is no way to “humanize” or improve a system whose fundamental purpose is to automate imperialist domination and to keep targeted regions under control by depopulating them. A few lines of code cannot neutralize a war machine that calculates the coordinates of missiles raining down on people around the world, the wind speed, and the margin of error of the massacre. The task before us is not to demand the regulation of algorithms but to organize a political opposition that will radically transform the property relations that bring those algorithms into existence and the imperialist war apparatus they serve. (DS/VC/VK)

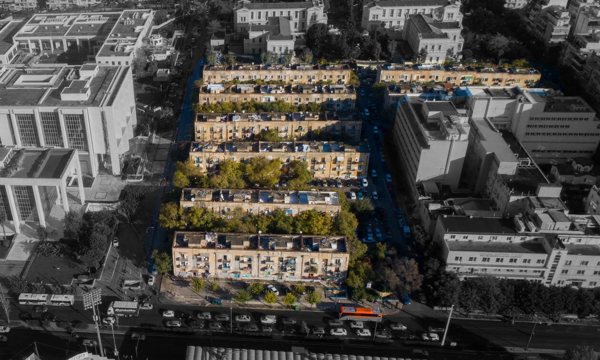

‘We will not give up a single inch of ground’: Prosfygika’s resistance against eviction

Spiros Grammenos: ‘I sing so memory won’t fade’

Larry Lohmann: AI is way too incoherent a gambit

Thaura.ai: An AI model against big tech domination

Resistance to digital colonialism in Palestine